Multicore and MPI-Start

By default, MPI-Start starts exactly as many processes as slots allocated by the batch system, regardless of the nodes on which those slots are located. While this behavior is fine for simple MPI applications, hybrid applications need finer control over the number and location of the processes they run.

MPI-Start 1.0.4

Since mpi-start 1.0.4, users are able to select the following placement policies:

- use a process per allocated slot (default),

- specify the total number of processes to start, or

- specify the number of processes to start at each node.

The last option is the most appropriate for hybrid applications. The typical use case is to start a single process per node and, within each node, as many threads as slots are allocated there.

Total Number of Processes

In order to specify the total number of processes to use, mpi-start provides the -np option or the I2G_MPI_NP environment variable. Both methods are equivalent.

For example, to start an application using exactly 12 processes (independently of the allocated slots) with the newer command line syntax:

mpi-start -t openmpi -np 12 myapp arg1 arg2

The placement of the processes is determined by the MPI implementation in use. Normally a round-robin policy is followed.
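The same request can also be expressed through environment variables. The following is only a sketch: I2G_MPI_NP comes from the text above, while I2G_MPI_TYPE, I2G_MPI_APPLICATION and I2G_MPI_APPLICATION_ARGS are assumed here to be the counterparts of -t and of the application arguments, so check them against your installed mpi-start version.

```shell
# Sketch of an environment-variable form of:
#   mpi-start -t openmpi -np 12 myapp arg1 arg2
export I2G_MPI_TYPE=openmpi                  # assumed counterpart of -t
export I2G_MPI_APPLICATION=myapp             # assumed: application to run
export I2G_MPI_APPLICATION_ARGS="arg1 arg2"  # assumed: application arguments
export I2G_MPI_NP=12                         # total number of processes to start

# mpi-start would then be invoked without any placement option.
```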

Processes per Host

Note (exclusive execution): it is advisable to run your application without sharing the Worker Nodes with other applications; otherwise its performance can suffer. In EMI CREAM you can request this by using WholeNodes=True in your JDL.

As with the total number of processes, either command line switches or environment variables may be used. In this case there are two different options:

- specify the number of processes per host, with the -npnode command line switch or the I2G_MPI_PER_NODE variable, or

- use -pnode or I2G_MPI_SINGLE_PROCESS to start only 1 process per host (this is equivalent to -npnode 1 or I2G_MPI_PER_NODE=1).

For example, to start an application using 2 processes per node (independently of the allocated slots):

mpi-start -t openmpi -npnode 2 myapp arg1 arg2

When using a single process per host, the OpenMP hook (enabled by defining MPI_USE_OMP=1) may also be used to let MPI-Start configure the number of OpenMP threads to start:

mpi-start -t openmpi -pnode -d MPI_USE_OMP=1 myapp arg1 arg2

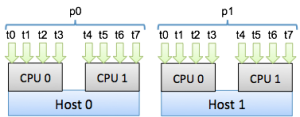

This is the typical case of a hybrid MPI/OpenMP application. The figure shows an application running on two hosts, each with two quad-core CPUs. Each host has one MPI process (named p0 and p1) and one thread for each of the available CPU cores (from t0 to t7).

MPI-Start will set the number of OpenMP threads to 8 and start only one process on each host.
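For the hybrid case, a possible environment-variable form is sketched below. I2G_MPI_SINGLE_PROCESS and MPI_USE_OMP are named in the text above; setting I2G_MPI_SINGLE_PROCESS to 1, and exporting MPI_USE_OMP instead of passing it with -d, are assumptions that should be checked against your mpi-start version.

```shell
# Sketch of the placement-related settings behind:
#   mpi-start -t openmpi -pnode -d MPI_USE_OMP=1 myapp arg1 arg2
export I2G_MPI_SINGLE_PROCESS=1   # one process per host (value 1 is an assumption)
export MPI_USE_OMP=1              # enable the OpenMP hook

# For 2 processes per host instead, I2G_MPI_PER_NODE=2 is the
# documented counterpart of -npnode 2.
```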

MPI-Start 1.0.5

MPI-Start 1.0.5 introduces more ways of controlling process placement. The previous options are still supported, and two new ones are available: start one process per socket and start one process per core.

MPI-Start assumes the whole host is allocated to the application execution in these cases!

Process per socket

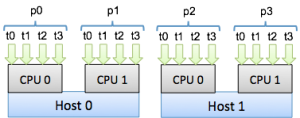

The -psocket option starts one process per CPU socket (with one thread for each core of that CPU). As shown in the figure for two hosts with two quad-core CPUs each, MPI-Start starts 2 processes on each host and one thread per core.

The following example shows how to run an application using this feature:

mpi-start -t openmpi -psocket -d MPI_USE_OMP=1 myapp arg1 arg2

Process per core

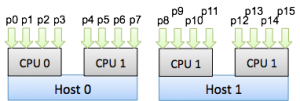

The -pcore option starts one process per core (no threads). The figure depicts this case.

The following example shows how to use this option:

mpi-start -t openmpi -pcore -d MPI_USE_OMP=1 myapp arg1 arg2
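The process and thread counts implied by the three per-host policies can be worked out with a little arithmetic. The sketch below assumes the topology of the figures (2 hosts, 2 sockets per host, 4 cores per socket) and whole-node allocation. The layout function and the variable names are purely illustrative and are not part of MPI-Start.

```shell
# Hypothetical topology, taken from the figures.
HOSTS=2
SOCKETS_PER_HOST=2
CORES_PER_SOCKET=4
CORES_PER_HOST=$((SOCKETS_PER_HOST * CORES_PER_SOCKET))

# layout POLICY: prints "<processes per host> <threads per process>".
layout() {
    case "$1" in
        pnode)   echo "1 $CORES_PER_HOST" ;;                     # 1 process, all cores as threads
        psocket) echo "$SOCKETS_PER_HOST $CORES_PER_SOCKET" ;;   # 1 process per socket
        pcore)   echo "$CORES_PER_HOST 1" ;;                     # 1 process per core, no extra threads
        *)       echo "unknown policy: $1" >&2; return 1 ;;
    esac
}

for policy in pnode psocket pcore; do
    # Split the two numbers printed by layout into $1 and $2.
    set -- $(layout "$policy")
    echo "-$policy: $1 per host, $2 thread(s) each, $((HOSTS * $1)) processes in total"
done
```

In every case the product of processes per host and threads per process equals the 8 cores of a host, which is why these options assume the whole host is allocated to the application.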

CPU Affinity

MPI-Start 1.0.5 introduces a new hook for supporting CPU affinity in Open MPI, as described in the Open MPI FAQ. To enable it, define MPI_USE_AFFINITY to 1. You also need to specify a per-core, per-node, or per-socket placement of the processes; otherwise the hook cannot determine how to assign the processes to CPUs.

The assignment of CPUs to processes is done in the following way:

- Per node: the single process on each host is assigned to all CPUs of that host

- Per core: each process is assigned to one CPU core

- Per socket: each process is assigned to one CPU socket

Do not run more than one application on the same nodes when using CPU affinity. This can lead to a serious degradation in performance.
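As an illustration, the affinity hook could be combined with a placement option as sketched below. This assumes that MPI_USE_AFFINITY is passed with -d in the same way as MPI_USE_OMP in the earlier examples. The PLACEMENT and CMD variables are only illustrative, and the command line is printed rather than executed so that it can be inspected outside a batch job.

```shell
# The affinity hook needs an explicit placement option: -pnode, -psocket or -pcore.
PLACEMENT="-psocket"

# Assemble the mpi-start command line (assumed form, following the earlier examples).
CMD="mpi-start -t openmpi $PLACEMENT -d MPI_USE_AFFINITY=1 myapp arg1 arg2"

# Print it; inside the job script the same command line would be run directly.
echo "$CMD"
```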